A Guide to the Mental Shortcuts That Shape Our Thinking

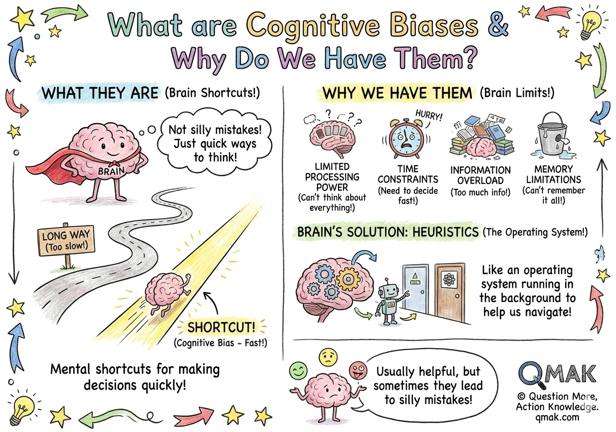

Cognitive biases are systematic patterns of deviation from rationality in judgment and decision-making. They’re not character flaws or signs of poor intelligence: they’re universal features of human cognition that evolved to help us survive. These mental shortcuts allow us to process information quickly and efficiently in a complex world where we face too much information, too little time, and limited mental resources.

Think of cognitive biases as the brain’s operating system.

They run in the background, influencing every decision we make, from what we buy at the grocery store to how we interpret the news.

While these shortcuts often serve us well, they can also lead us astray, especially in our modern world where the challenges we face are very different from those our ancestors encountered on the savannah.

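Every cognitive bias exists for a reason, primarily to save our brains time or energy. The human brain, despite being remarkably powerful, works under significant limitations: more information than it can process, too little time to deliberate, and finite attention and memory.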

To cope with these constraints, our brains have developed shortcuts: heuristics that help us navigate the world without becoming paralyzed by analysis. These shortcuts work well enough most of the time, which is why they’ve persisted through evolution. However, they can also introduce systematic errors into our thinking.

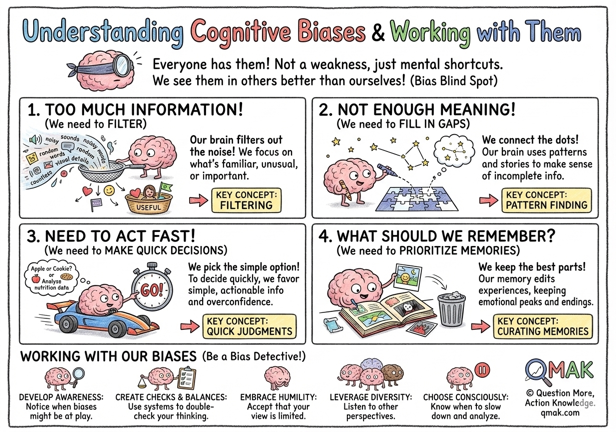

It’s crucial to understand that everyone has cognitive biases.

They’re not a sign of weakness or stupidity. From Nobel Prize-winning scientists to everyday decision-makers, we all use these mental shortcuts. The key difference isn’t whether we have biases (we all do), but whether we’re aware of them and can recognize when they might be influencing our judgment.

Interestingly, we also suffer from a “bias blind spot”: we’re much better at recognizing biases in others than in ourselves.

This is why external feedback and systematic decision-making processes can be so valuable.

Every cognitive bias is an attempt to solve one of four fundamental challenges: too much information, not enough meaning, the need to act fast, and limited memory.

In every moment, our senses are bombarded with far more information than we can consciously process.

The sounds around us, the feeling of our clothes, the countless visual details in our environment: if we tried to consciously attend to everything, we’d be overwhelmed.

To cope, our brains have developed sophisticated filtering mechanisms that automatically decide what’s worth our attention.

These filters prioritize information that confirms what we already believe, that we’ve seen before, that stands out as unusual, or that seems immediately relevant to our goals. While this filtering is essential for functioning, it means we’re always working with an incomplete picture of reality, and our filters can cause us to miss important information that doesn’t fit our expectations.

Even after filtering, the information that reaches our conscious awareness is often ambiguous, incomplete, or contradictory.

We rarely have all the facts we need to fully understand a situation, yet we need to make sense of the world to navigate it effectively. Our brains solve this problem by automatically filling in gaps with assumptions, finding patterns (even where none exist), and creating coherent narratives from scattered data points. We draw on stereotypes, past experiences, and general rules to construct meaning from limited information.

This meaning-making ability is remarkable: it allows us to function with incomplete data. But it can also lead us to see connections that aren’t there, to overgeneralize from limited examples, and to create false narratives that feel completely real to us.

Life requires constant decision-making, and we rarely have the luxury of unlimited time to deliberate.

From ancestral dangers that demanded split-second reactions to modern challenges like navigating traffic or responding in conversations, we need to act quickly and decisively. To enable rapid decision-making, our brains favor simple, actionable information over complex, nuanced understanding.

We’re biased toward overconfidence (which enables action), we prefer immediate rewards over future benefits, and we stick with default options to avoid the complexity of choice. These tendencies help us avoid “analysis paralysis” and take necessary action, but they can also lead to impulsive decisions, short-term thinking, and resistance to beneficial changes.

Our brains have limited storage capacity and retrieval capability, so we can’t remember everything we experience.

To manage this constraint, our memory systems actively edit and curate our experiences, keeping what seems most important and discarding or compressing the rest. We tend to remember the peak emotional moments and endings of experiences, while forgetting duration. We generalize specific events into broader patterns and rules. We constantly update our memories based on new information and current beliefs.

This selective memory system helps us learn from experience and avoid information overload, but it also means our memories are far less reliable than they feel. We forget important details, create false memories, and reinforce our existing beliefs by selectively remembering confirming evidence.

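Understanding cognitive biases isn’t about eliminating them (that’s impossible). Instead, it’s about recognizing when they might be influencing our judgment and building in checks, such as outside perspectives and systematic decision-making processes, for the moments when that influence matters.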

The goal isn’t to become a purely rational being. Our biases often serve us well. The goal is to become more conscious of how our minds work, so we can make better decisions when it matters most.

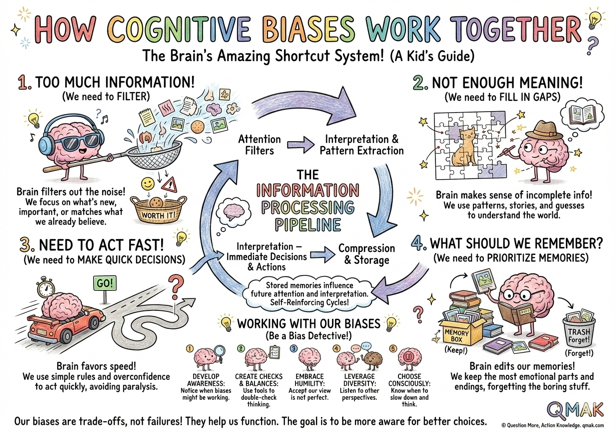

These four categories of cognitive biases don’t operate in isolation.

They work together as an integrated system that helps us navigate a complex world with limited cognitive resources. Understanding how they interact reveals the deeper logic of human cognition:

The Information Processing Pipeline: Information enters through our attention filters (Part 1), is interpreted and mined for meaningful patterns (Part 2), influences immediate decisions and actions (Part 3), and is finally compressed and stored for future use (Part 4). Each stage shapes what reaches the next, creating a highly personalized version of reality.

Self-Reinforcing Cycles: These biases create feedback loops. We notice information that confirms our beliefs (Part 1), interpret ambiguous data as supporting those beliefs (Part 2), act confidently on those interpretations (Part 3), and then remember selectively in ways that strengthen the original beliefs (Part 4). This is why beliefs and worldviews are so resistant to change.

Adaptive Trade-offs: Each bias represents a trade-off between competing needs. We can’t process everything, so we filter. We can’t store everything, so we compress. We can’t wait for certainty, so we jump to conclusions. These aren’t failures but features that allow us to function. The key is recognizing when these usually helpful shortcuts are leading us astray.

Cognitive biases evolved to help us survive by making quick decisions with limited information.

In ancestral environments where information was scarce, threats were immediate, and social groups were small, these shortcuts were highly adaptive. They allowed our ancestors to act quickly, learn from experience, and cooperate effectively.

The problem isn’t the biases themselves but the mismatch between the environment they evolved for and our modern world of information overload, abstract risks, and global interconnection.

Knowing about biases doesn’t make you immune, but it can help you recognize when you might be affected.

Bias operates largely below consciousness, often persisting even when we know about it. However, awareness allows us to build in checks and balances, seek outside perspectives, and recognize situations where our intuitions are likely to mislead.

The goal isn’t to eliminate biases but to work with them more skillfully.

What helps in one situation may hurt in another.

The optimism bias that helps entrepreneurs persist might undermine realistic planning. The availability heuristic that helps us avoid repeated dangers might lead us to overestimate rare but memorable risks. Context matters enormously.

The key is developing judgment about when to trust our cognitive shortcuts and when to override them with more deliberative thinking.

Every human brain uses these shortcuts; they’re universal features of human cognition.

From Nobel laureates to newborns, we all filter information, seek patterns, jump to conclusions, and edit memories. Recognizing this universality promotes both humility about our own limitations and empathy for others’ mistakes.

We’re all doing the best we can with brains designed for a different world.

Multiple biases often compound to influence our decisions.

Real-world thinking involves cascades of biases reinforcing each other. Confirmation bias affects what we notice, which influences how we interpret information, which shapes our decisions, which get encoded in memory selectively. Understanding these interactions is crucial for recognizing how powerfully our cognition shapes our reality.

Single biases are manageable; it’s their combination that creates our personal versions of reality.

The human mind is not a broken machine that needs fixing, but an adapted system doing its best in a world it wasn’t designed for. By understanding our cognitive biases, we can work with our minds rather than against them, making better decisions while remaining compassionate about our shared human limitations.